People process what they have learned mainly at night, when specific mechanisms are at work in the brain during deep sleep. As the trade journal "Entwicklung & Elektronik" (E&E) reports (link in German), researchers at TU Ilmenau have now transferred these processes to artificial neural networks to make machine learning more efficient.

"Scientists have already frequently developed new methods based on observations from nature," write the researchers led by Prof. Patrick Mäder of TU Ilmenau in the preface to their study. In it, they transferred the biological process of learning to artificial neural networks and thereby increased the networks' performance.

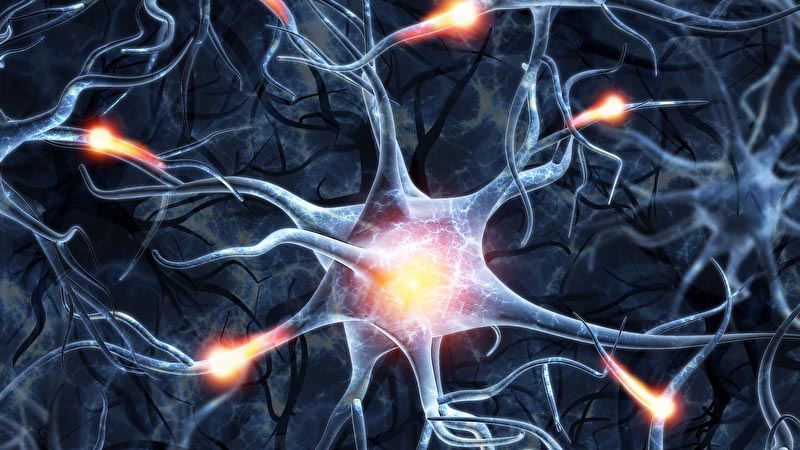

For background: according to "E&E", sleep researchers have only understood for a few years how nocturnal learning works. The findings rest on the observation that, during waking phases, synapses do not merely transmit chemical or electrical signals from neuron to neuron but also amplify or attenuate their intensity. "In this way, neurons are enabled to absorb and adapt to the changing influences of the environment," the science journalists explain. During sleep, this state of arousal returns to normal, and the nervous system can process the information absorbed during the waking phase and consolidate it in memory by forgetting random or unimportant information. At the same time, it becomes more receptive to new information.

Prof. Patrick Mäder, head of the Department of Software Engineering for Safety-Critical Systems at TU Ilmenau, built on this process, known as synaptic plasticity. "Synaptic plasticity is responsible for the function and performance of our brain and thus the basis of learning," he is quoted as saying in "E&E". "If the synapses always remained in an activated state, this would ultimately make learning more difficult, as we know from animal experiments. Only the recovery phase during sleep makes it possible for us to retain what we have learned in memory."

The researchers have now imitated, in artificial neural networks, the synaptic system's ability to react dynamically to different stimuli while keeping the nervous system stable. Using so-called synaptic scaling, they transferred the mechanisms that regulate the brain to machine learning methods, with the result that the artificial neural models behave with similar effectiveness to their natural model.
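To give a rough idea of what synaptic scaling can mean in software, here is a minimal Python sketch. It is not the Ilmenau team's method, which the article does not describe in detail. It only assumes the common textbook reading of the term: all incoming weights of a neuron are multiplied by one shared factor, so overall excitability is brought back to a set level while the relative strengths of the individual synapses are preserved. The function name `synaptic_scaling` and the parameter `target_norm` are hypothetical and chosen for illustration.

```python
import numpy as np


def synaptic_scaling(weights, target_norm=1.0, eps=1e-12):
    """Multiplicatively rescale each neuron's incoming weights.

    weights: array of shape (n_inputs, n_neurons); column j holds the
    incoming weights of neuron j. Each column is multiplied by a single
    factor so that the sum of its absolute weights equals target_norm.
    This is an illustrative assumption, not the published method.
    """
    norms = np.abs(weights).sum(axis=0, keepdims=True)
    # eps guards against division by zero for a neuron with all-zero weights.
    return weights * (target_norm / np.maximum(norms, eps))


rng = np.random.default_rng(0)
w = rng.normal(size=(4, 3))
w[:, 0] *= 10.0  # one neuron whose synapses grew strongly during "waking" training

w_scaled = synaptic_scaling(w)

# Every neuron now has the same total incoming weight ...
print(np.abs(w_scaled).sum(axis=0))
# ... while the ratios between its individual synapses are unchanged.
print(np.allclose(w_scaled[:, 0] / w_scaled[0, 0], w[:, 0] / w[0, 0]))
```

In a training loop, such a step could be applied periodically between learning phases, loosely analogous to the recovery phase during sleep described above.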

Text: Ingo Schenk
